The Machine Is Building Itself

Recursive Self-Improvement Just Broke Out of Software and Into the Physical World

Machines building machines… recursive self-improvement on steroids… the acceleration of the acceleration. Call it what you like; this is the greatest driver of abundance this decade.

Three stories broke this week that, when you connect the dots, paint a picture of an audacious—almost unfathomable—future.

Whether this scares you or excites you (and I do hope it is the latter), it’s happening and we all need to prepare “to Surf the Supersonic Tsunami… rather than be crushed by it.”

Let me walk you through what happened, why it matters, and what you need to do about it.

Story 1: The Factory That Builds the Future: Elon’s Terafab

First Elon asks all the chip manufacturers, “Can you please make more chips, a lot more chips… I will pay you for as much production as you can give me. I don’t want to compete with you—I want to buy everything you make.” And when they couldn’t move fast enough, he said, “Fine. I’ll build my own.”

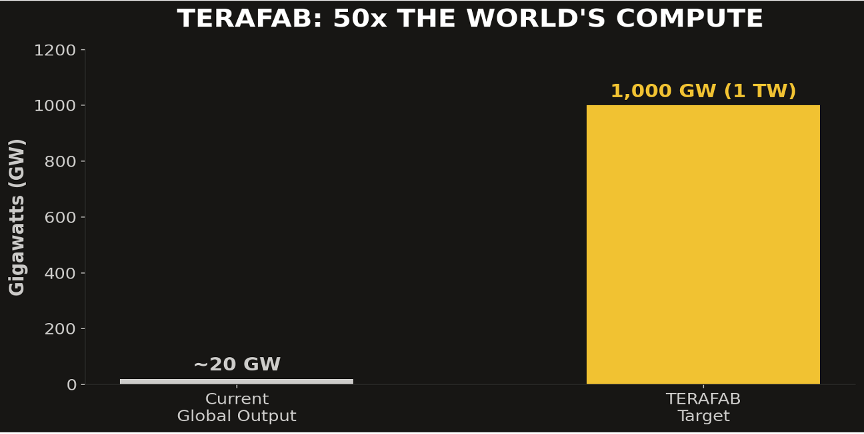

Perhaps the story of the decade, and in my opinion at least the story of 2026 (so far), is Elon’s announcement of the Terafab: his effort across MuskWorld (Tesla/xAI/SpaceX) to build 1 terawatt (1 TW) of AI compute per year. To put that in context, the current global output of AI compute is about 20 gigawatts.

Elon’s goal is to build 50 times the current production rate of the entire planet.

Not 50% more. Fifty times more. And he’s not waiting for TSMC or Samsung or Intel to catch up. He’s building it himself in Austin with full vertical integration under one roof (100 million sq ft… Wow! The audacity of this guy!!)
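For anyone who wants to check the math, here is a minimal sketch of the scale-up. It uses only the two figures quoted above (about 20 gigawatts today, 1 terawatt targeted); the function and variable names are mine, purely for illustration.

```python
# Both figures are the ones quoted in the text, not independent measurements.
CURRENT_GLOBAL_GW = 20       # stated current global AI compute output, in gigawatts
TERAFAB_TARGET_GW = 1_000    # 1 terawatt expressed in gigawatts


def scale_factor(target_gw: float, baseline_gw: float) -> float:
    """Return how many times larger the target is than the baseline."""
    return target_gw / baseline_gw


multiple = scale_factor(TERAFAB_TARGET_GW, CURRENT_GLOBAL_GW)
print(f"Terafab target is {multiple:.0f}x the stated current output")
```

If either input figure turns out to be off, the multiple moves with it, so treat the 50x as only as good as the 20-gigawatt estimate.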

This is Elon’s playbook, repeated:

1. Don’t depend on suppliers (they don’t move fast enough).

2. Vertically integrate and own your own destiny.

3. Rapidly iterate.

This is the exact playbook he ran in the launch industry. SpaceX lapped the entire existing launch industry by orders of magnitude. Then he did it again with electric cars. Now he’s doing it with chips.

But here’s the kicker: the Terafab is not just about scale. It’s about speed. Elon’s building this facility specifically to enable rapid iteration on chip design. Full vertical integration means he can run experiments, fail fast, and iterate in real time.

And remember that Elon’s also building Super Intelligence with Grok… so who do you think will design Terafab and his AI chips? Humans? Likely SuperGrok… cranking out new chip designs daily, testing them, iterating, improving. That’s the machine building itself. Literally.

5 Major Implications of Elon’s Vision

1/ The chip bottleneck is ending, and everything changes when it does.

For the last decade, AI progress has been throttled by one thing: compute. Not ideas. Not talent. Not capital. Compute. Terafab doesn’t just ease that constraint… it obliterates it. When you go from 20 gigawatts to 1 terawatt, you’re not uncorking a bottle. You’re removing the bottle entirely. Every AI lab, every startup, every researcher on the planet gets access to orders of magnitude more processing power. The models that follow will make GPT-4 look like a pocket calculator.

2/ This is the first real shot at AI-designed AI – at industrial scale.

Terafab makes recursive self-improvement operational. SuperGrok designing chips. Those chips training better versions of SuperGrok. Better versions of SuperGrok designing better chips. Rinse and repeat. Hourly. This isn’t a research paper. And it’s not a thought experiment. This is a factory being built in Austin starting now.

3/ Vertical integration is the new moat and Elon just dug the deepest one in history.

SpaceX won the commercial space race by owning the entire stack: design, manufacturing, iteration, launch. Tesla didn’t win by making prettier cars (though I do love the designs!). It won by owning the battery, the software, the charging network. MuskWorld is now applying that exact playbook to the most valuable commodity on Earth: AI compute. The companies that don’t own their full stack are going to find themselves at the mercy of those who do!

4/ The geopolitical order is being redrawn, and Silicon is the new oil.

Taiwan produces over 90% of the world’s advanced chips. That single fact has held US foreign policy hostage for years. Terafab is Elon’s bet that America doesn’t have to live with that vulnerability. If (or when) he pulls it off, the US achieves chip independence, and Taiwan stops being the most dangerous flashpoint on Earth (hopefully, just in time!). That’s not just an economic story. That’s a peace story.

5/ We are witnessing the birth of the first $100 trillion ecosystem.

NVIDIA at $4.25 trillion feels surreal. Apple at $3 trillion felt impossible a decade ago. But those numbers are built on selling into a world constrained by today’s compute. MuskWorld isn’t playing that game. Tesla, SpaceX, xAI, and Terafab together form a closed-loop economy of energy, intelligence, transportation, and compute. If Terafab delivers even a fraction of what Elon is promising, we’re not talking about the world’s most valuable company. We’re talking about a financial valuation category that doesn’t exist yet.

Let that sink in…

Story 2: The 12-Hour Chip: When AI Designs Its Own Hardware

Here’s the second development that caught my attention, and it tells the same story of super abundance. A company called Verkor AI launched something called “Design Conductor”: an AI agent that autonomously designed a complete 1.5 gigahertz, Linux-capable RISC-V CPU from concept to tape-out in twelve hours.

Let me repeat that: Twelve hours. Start to finish. A traditional engineering team would have taken ninety days to do the same work.

That's not a 7x improvement. That's a 180x compression of the engineering cycle.
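A quick sanity check on that 180x, as a rough sketch. It assumes the 90 days are calendar days counted around the clock; `compression_ratio` and its `hours_per_day` parameter are illustrative names of mine, not anything published by Verkor AI.

```python
def compression_ratio(baseline_days: float, new_hours: float,
                      hours_per_day: float = 24) -> float:
    """Ratio of the old cycle time to the new one, both measured in hours."""
    baseline_hours = baseline_days * hours_per_day
    return baseline_hours / new_hours


# Calendar-time comparison: 90 days of 24 hours versus 12 hours.
calendar_ratio = compression_ratio(baseline_days=90, new_hours=12)

# Alternative assumption: count only 8-hour working days for the human team.
working_ratio = compression_ratio(baseline_days=90, new_hours=12, hours_per_day=8)

print(f"Calendar-time compression: {calendar_ratio:.0f}x")
print(f"Working-hours compression: {working_ratio:.0f}x")
```

Count only 8-hour working days and the same comparison comes out at 60x, which is still a dramatic compression.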

What does this mean? Here are the top 4 implications as I see them:

1/ The 90-day engineering cycle is dead… every industry that depends on custom hardware just got a new superpower.

Twelve hours from concept to tape-out isn’t an incremental improvement — it’s a category collapse. The quarterly chip design cycle has been the invisible ceiling on hardware innovation for decades. Medical devices, robotics, aerospace, defense — every industry that’s been waiting 18 months for purpose-built silicon is about to find out what happens when that wait drops to an afternoon. The bottleneck wasn’t ideas. It was time. That excuse is gone.

2/ We are entering the era of disposable chip designs.

When you can design a chip in twelve hours, you stop thinking about chips as fixed infrastructure and start thinking about them as consumable assets. Need a chip optimized specifically for protein folding simulations? Done by lunch. Need one tuned for real-time surgical robotics? Done by dinner.

3/ This is recursive self-improvement leaving the software layer.

We spent the last five years watching AI optimize code. Now it’s optimizing the chips that run the code. Next it will optimize the data centers those chips sit in. Then the energy systems powering those data centers. Then the supply chains feeding the whole stack. This isn’t a chip story. This is the opening move in AI redesigning the entire physical economy from the inside out.

4/ 50,000 hardware engineers aren’t becoming obsolete; they’re becoming force multipliers.

So, what happens when 50,000 world-class hardware engineers stop spending 90% of their time on grunt work and start spending 100% of their time on imagination? You don’t get fewer chips. You get an explosion of them — each one purpose-built, hyper-efficient, and targeted at problems we couldn’t previously afford to solve. This isn’t job destruction. It’s the single greatest amplification of human engineering talent in history.

Story 3: The Ghost in the Machine: When Anonymous Beats Branded

Now for the third story, and this one’s wild.

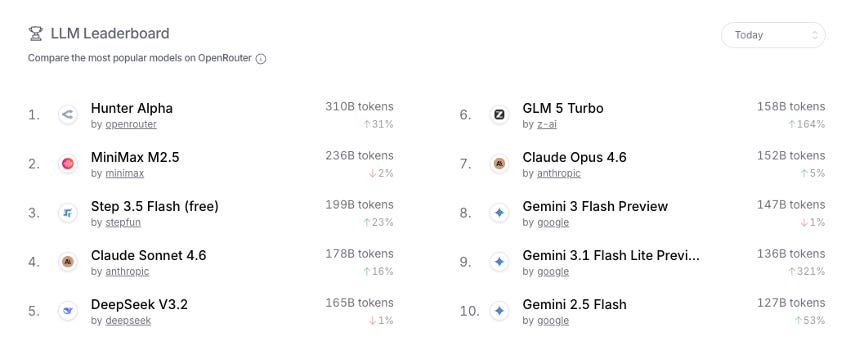

A mystery model appeared on OpenRouter this week. No attribution. No press release. No origin story. Just a one-trillion-parameter model called “Hunter Alpha” with a million-token context window, running completely free.

It processed over 160 billion tokens before anyone figured out who built it. Everyone assumed it was DeepSeek v4, from the Chinese lab that’s been making waves. But it turned out to come from Xiaomi’s AI team.

When that was revealed, Xiaomi’s stock jumped 5.8% in a single day.

Think about what just happened: A company launched a frontier-class AI model anonymously, and it gained massive traction purely on merit. No brand. No marketing. No hype cycle. Just performance.

This is the future. Models are becoming commodities. The traditional moats—brand, capitalization, who you are—are evaporating. New players are rushing in with competitive models, and users are increasingly agnostic about which model they use.

So, what are the implications of this story? Here’s my Top 3:

1/ The brand moat in AI is dying and most companies haven’t figured that out yet.

A trillion-parameter model ran free and anonymous on the internet for long enough to process 160 billion tokens before anyone even knew who built it.

Nobody cared it wasn’t OpenAI. Nobody cared it wasn’t Google. They used it because it *worked*. That’s the signal. We are entering a world where AI capability is table stakes: abundant, cheap, and increasingly unbranded.

The companies still betting their strategy on “we have the best model” are building on sand. The model is not the moat. Read this many times until it sinks in!

2/ A $50 million AI lab is no longer science fiction. It’s Tuesday.

Frontier-class, trillion-parameter models are going to propagate all over the world for anyone willing to invest $50 to $100 million. And that number is falling fast. Algorithmic efficiency, distillation, and the fact that every prior model is essentially a roadmap for building the next one — these forces are compressing the cost of intelligence at a rate that should terrify incumbents and electrify entrepreneurs. The barriers that protected the big labs are evaporating in real time. This is not a gradual shift. This is an avalanche.

3/ The new gold rush isn’t models. It’s proprietary data, and the window to stake your claim is closing.

If the model is a commodity, then the only thing that can’t be replicated is what trained it. Medical scan repositories. Materials science datasets. Chemistry simulation outputs. Genomic databases. The organizations quietly sitting on mountains of domain-specific, real-world data are about to discover they’re holding the most valuable asset in the AI economy — whether they know it or not. The playbook is simple but brutal: proprietary data plus commodity models equals durable competitive advantage. Everyone who doesn’t own unique data is going to be racing on a treadmill they can never get off. The time to go build — or acquire — that data moat is right now.

“This is the playbook: proprietary data plus commodity models equals competitive advantage.”

What You Need to Do

Okay, so the machine is building itself. Recursive self-improvement has escaped software. AI is designing chips, building fabs, and launching models anonymously. What do you do about it?

If you’re an entrepreneur: Stop competing on generic software features. Start accumulating proprietary data in your domain. The models are becoming commodities; your data isn’t. Find the bottleneck in your industry and build the vertical integration to iterate faster than anyone else. Elon’s showing you the playbook: don’t wait for suppliers to catch up. Build it yourself.

If you’re a student: Replay Alex Wissner-Gross’ explanation of distillation ten times until you fully understand it. Then ask your favorite AI to expand on it, and read every document on the topic you can find around the internet. At the end of that process, you’ll be able to build a distilled, focused model that solves some problem better than anyone else on the planet. That’s instant value. That’s instant employability. That’s your ticket to the future.

If you’re a parent: Your kids need to understand that the future belongs to people who can leverage AI, not compete with it. The engineers aren’t going away. In fact, they’re being multiplied. But the ones who thrive will be the ones who use AI to design ten chips instead of one. Teach your kids to think in terms of leveraging superintelligence, not fighting it.

If you’re an investor: Look for the innermost loop. Alex has been beating this drum for months, and he’s right. The tailwinds in equities and assets are like nothing we’ve ever seen, but you have to be in the AI loop to be relevant. Go to 13f.info, look up Leopold Aschenbrenner’s Situational Awareness Fund, and study his holdings. He’s buying chip fabs, power infrastructure, chip design companies, algorithmic plays: anything directly at the center of the innermost loop. That’s your roadmap.

Here’s my final thought: The singularity isn’t waiting. The machine is building itself right now: faster than most people realize, faster than most institutions can adapt.

We’re watching the loop close in real time. AI designing chips. Those chips powering better AI. That better AI designing better chips. And the cycle accelerates.

The question is not whether this is happening. The question is whether you’re positioned to benefit from it or be disrupted by it.

Choose wisely. The future is being built this week.

— Peter


I love these insights but they read like they are all written by AI. For example “aren’t becoming obsolete; they’re becoming force multipliers.”

Do you label content written with AI and is there any way for us to tell what is Peter’s voice and what is just Peter prompted?

This feels like a bet on everything going right at the same time.

But I think the more interesting part is why that’s even necessary.

AI isn’t just a software story anymore. It’s running into physical limits. Chips, energy, infrastructure.

When you start hearing about rebuilding the entire system instead of improving it, it usually means the current one can’t support what’s coming next, and that’s the part worth paying attention to.